Datasets & Testing for LLM Applications

Datasets in Langfuse are a collection of inputs (and expected outputs) of an LLM application. They are used to benchmark new releases before deployment to production. Datasets can be incrementally created from new edge cases found in production.

High-level example workflow of using datasets to continuously improve an LLM application:

Introduction to Datasets v2:

For an end-to-end example, check out the Datasets Notebook (Python).

Creating a dataset

Datasets have a name which is unique within a project.

langfuse.create_dataset(

name="<dataset_name>",

# optional description

description="My first dataset",

# optional metadata

metadata={

"author": "Alice",

"date": "2022-01-01",

"type": "benchmark"

}

)See low-level SDK docs for details on how to initialize the Python client.

Create new dataset items

Individual items can be added to a dataset by providing the input and optionally the expected output.

langfuse.create_dataset_item(

dataset_name="<dataset_name>",

# any python object or value, optional

input={

"text": "hello world"

},

# any python object or value, optional

expected_output={

"text": "hello world"

},

# metadata, optional

metadata={

"model": "llama3",

}

)See low-level SDK docs for details on how to initialize the Python client.

Create items from production data

In the UI, use + Add to dataset on any observation (span, event, generation) of a production trace.

Edit/archive items

Archiving items will remove them from future experiment runs.

In the UI, you can edit or archive items by clicking on the item in the table.

Run experiment on a dataset

When running an experiment on a dataset, the application that shall be tested is executed for each item in the dataset. The execution trace is then linked to the dataset item. This allows to compare different runs of the same application on the same dataset. Each experiment is identified by a run_name.

Optionally, the output of the application can be evaluated to compare different runs more easily. More details on scores/evals here. Options:

- Use any evaluation function and directly add a score while running the experiment. See below for implementation details.

- Set up model-based evaluation within Langfuse to automatically evaluate the outputs of these runs.

dataset = langfuse.get_dataset("<dataset_name>")

for item in dataset.items:

# Make sure your application function is decorated with @observe decorator to automatically link the trace

with item.observe(

run_name="<run_name>",

run_description="My first run",

run_metadata={"model": "llama3"},

) as trace_id:

# run your @observe() decorated application on the dataset item input

output = my_llm_application.run(item.input)

# optionally, evaluate the output to compare different runs more easily

langfuse.score(

trace_id=trace_id,

name="<example_eval>",

# any float value

value=my_eval_fn(item.input, output, item.expected_output),

comment="This is a comment", # optional, useful to add reasoning

)

# Flush the langfuse client to ensure all data is sent to the server at the end of the experiment run

langfuse.flush()See low-level SDK docs for details on how to initialize the Python client and see the Python decorator docs on how to use the @observe decorator for your main application function.

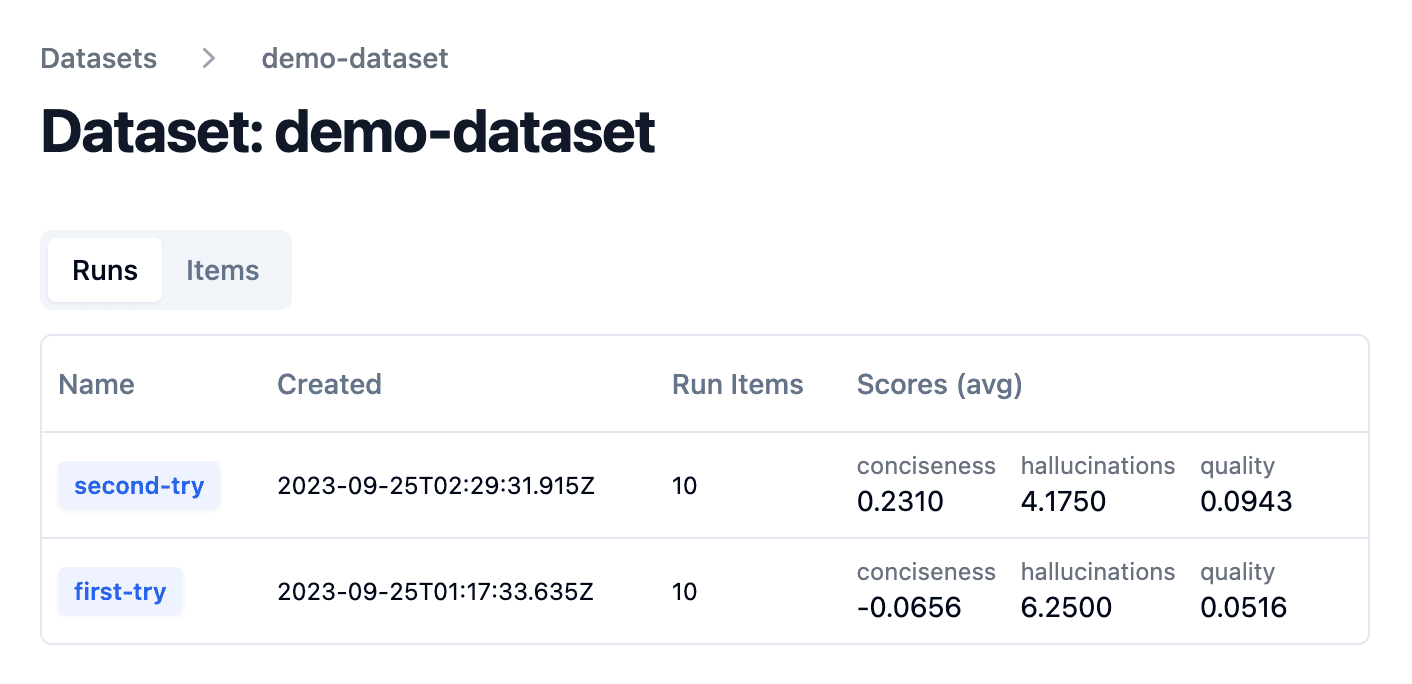

Analyze dataset runs

After each experiment run on a dataset, you can check the aggregated score in the dataset runs table.